GPT-4

🔗 a link post linking to openai.com

The latest update to OpenAI’s preeminent model seems very impressive. It performs better on exams, and it can now accept images as input alongside text prompts.

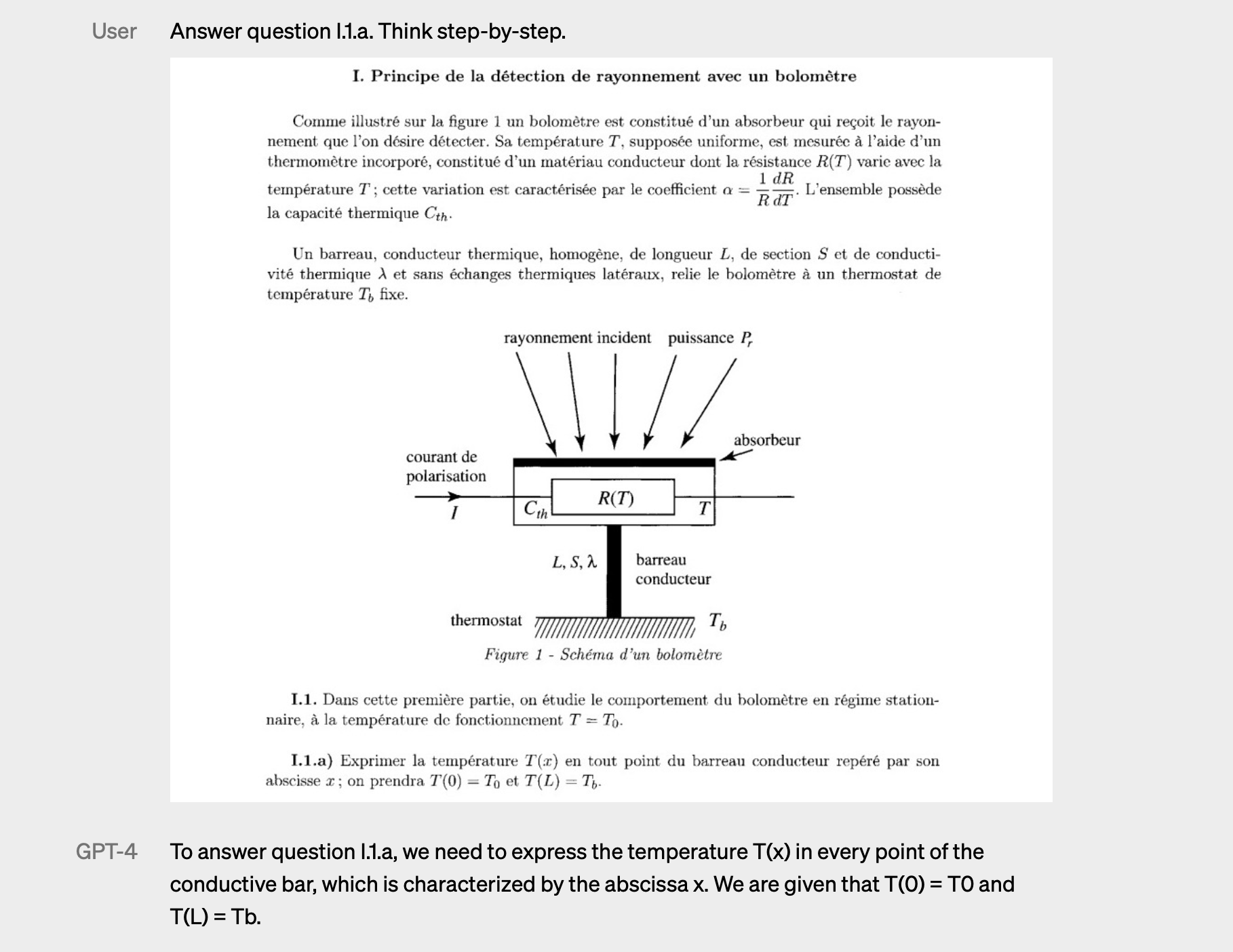

Until now, I hadn’t felt completely mystified by ChatGPT and what it’s doing. It was easy to tell myself “it’s just choosing the next word using a very fancy weighted decision network,” until I saw this example:

The model is given a scan of a page in French containing a setup, a diagram, and two questions. The text prompt asks it to answer one of those questions, but it never states what the question is; it says only “Answer question I.1.a.”

This feels like a sleight-of-hand magic trick that I can’t figure out. It doesn’t feel like it’s just predicting the next word, even if that is true. It feels like we are in for some big changes over the next year, and over the next five years.

When large multimodal models can ace any exam and offer very good advice for most problems a person might face, the definition of expertise will need to change.

Would you rather hire someone who earned B grades throughout university and is an “expert,” or someone who didn’t go to university but has a large model sidekick to guide them? Who would really be the expert? Even if the “traditional expert” also had a large model sidekick, how much more of an expert would they really be? Will the difference be that large?

Expertise might end up mattering less. Instead, it feels like what will matter are things that are manual:¹

> Of or pertaining to the hand
>
> Performed by a person using physical as contrasted with mental effort

“Mental effort” might soon matter a lot less. “Manual effort” will be special, and maybe rarer.

When photography came onto the scene, people didn’t stop painting entirely. Painting became special, and rarer. Today most people take selfies or perhaps hire a portrait photographer; they rarely hire a portrait painter.

Large multimodal models feel like nascent “photography for mental effort.”

Tom Scott made a related video a month ago, mainly asking, “Where are we on the S-curve for AI tech?”

It certainly feels like we are at the start of the S-curve today.

¹ I often consult Webster’s Unabridged 1913 Dictionary; it always helps me think more broadly and clearly.